Photo by Cristiano Firmani on Unsplash

Last summer, I took a photo of a sunset at the beach with my iPhone 15 Pro. The image that appeared on my screen was absolutely breathtaking—vivid oranges bleeding into purples, perfect contrast, skin tones that looked like I'd stepped out of a magazine. When I looked up from my phone, I realized the actual sunset was... fine. Pretty, sure. But nowhere near as dramatic as what my camera had captured. That's when I started asking uncomfortable questions about modern smartphone photography.

The Computational Photography Revolution Nobody Talks About

Your smartphone camera isn't just capturing light anymore. It's making artistic decisions. Engineers at companies like Apple, Google, and Samsung have spent billions developing algorithms that process your photos in real time, making adjustments that would have taken a professional photographer hours in Photoshop.

Google's computational photography approach is particularly aggressive. When you take a photo on a Pixel phone, the device captures a burst of exposures in rapid succession (some overexposed, some underexposed) and blends them together. But that's just the start. The phone then applies machine learning models trained on millions of images to identify what's in the frame. Is it a face? Boost the skin tones. A sunset? Increase saturation. Food? Make it look more appetizing. A landscape? Enhance the sky.
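To make the blending step concrete, here is a minimal sketch in Python. It is not Google's pipeline. It assumes a simple "well-exposedness" weighting, where each pixel counts for more the closer it sits to mid-gray, and the function names and the sigma value of 0.2 are invented for illustration.

```python
import math

def well_exposedness(value, sigma=0.2):
    """Weight a pixel (0.0 to 1.0) by how close it is to mid-gray."""
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))

def fuse_exposures(frames):
    """Blend same-sized grayscale frames pixel by pixel.

    frames: list of frames, each a flat list of floats in [0, 1].
    """
    fused = []
    for pixels in zip(*frames):
        weights = [well_exposedness(p) for p in pixels]
        total = sum(weights)
        fused.append(sum(w * p for w, p in zip(weights, pixels)) / total)
    return fused

# Hypothetical two-pixel scene: a deep shadow and a bright sky.
under = [0.02, 0.55]  # shadow crushed, sky well exposed
over = [0.45, 1.00]   # shadow visible, sky blown out
fused = fuse_exposures([under, over])
print(fused)
```

Each output pixel leans toward whichever frame exposed it best, so the shadow comes mostly from the brighter frame and the sky mostly from the darker one. Neither source frame looked like the result.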

The remarkable part? All of this happens in milliseconds, before you even see the image in your gallery. You're not taking photos anymore. You're collaborating with an AI that has strong opinions about what looks good.

When Enhancement Becomes Distortion

This sounds good in theory. Who doesn't want better-looking photos? But there's a creeping problem that nobody wants to acknowledge: these enhancements are becoming increasingly divorced from reality.

Consider portrait mode, which every modern flagship phone offers. The software uses AI to detect faces and blur the background, mimicking the depth-of-field effect from expensive cameras. Except it often gets it wrong. Hair becomes soft and indistinct. The rims of glasses sometimes dissolve into the background blur. Skin becomes artificially smoothed, erasing freckles and texture that actually make people look like themselves.
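A toy version of that failure is easy to reproduce. The sketch below, in Python, treats one row of pixels as the image and assumes the phone has already produced a subject mask. The names and pixel values are hypothetical, and a box blur stands in for whatever lens-blur simulation a real phone uses.

```python
def box_blur(row, radius=1):
    """Average each pixel with its neighbors (edges are clamped)."""
    n = len(row)
    out = []
    for i in range(n):
        window = [row[min(max(j, 0), n - 1)]
                  for j in range(i - radius, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out

def portrait_blur(row, subject_mask, radius=1):
    """Keep pixels the mask marks as subject; blur everything else."""
    blurred = box_blur(row, radius)
    return [p if is_subject else b
            for p, b, is_subject in zip(row, blurred, subject_mask)]

# Hypothetical row: background (0.2), a strand of hair (0.9), then face (0.6).
row = [0.2, 0.2, 0.9, 0.6, 0.6]
correct_mask = [False, False, True, True, True]
wrong_mask = [False, False, False, True, True]  # hair mistaken for background

kept = portrait_blur(row, correct_mask)
lost = portrait_blur(row, wrong_mask)
print(kept[2], lost[2])
```

With the correct mask the hair pixel survives untouched. With one misclassified pixel it is averaged into its neighbors, which is the soft, indistinct hair described above.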

A study by researchers at the University of Washington found that when people view photos processed by modern smartphone algorithms, they consistently rate them as more attractive than the actual subjects—even when shown side-by-side comparisons. This creates what some psychologists are calling "reality drift," where our expectations for what humans should look like become calibrated to AI-processed images rather than actual humans.

The implications for how we see the natural world are equally troubling. When your phone processes a landscape photo, it often boosts greens and blues, making nature look more vibrant and saturated than what your eyes actually see. Photographers have started calling this "Instagram realism": a version of nature that's more aesthetically pleasing but factually inaccurate. If millions of people are only ever seeing enhanced versions of their natural environment, what does that do to our perception of the world?
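A saturation boost of this kind takes only a few lines. The following Python sketch uses the standard library's colorsys module. The 1.3 factor and the sample color are assumptions chosen for illustration, not values taken from any phone.

```python
import colorsys

def boost_saturation(rgb, factor=1.3):
    """Scale a color's saturation, leaving hue and brightness alone."""
    r, g, b = rgb
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return colorsys.hsv_to_rgb(h, min(s * factor, 1.0), v)

def saturation(rgb):
    """Return the HSV saturation of an RGB color."""
    return colorsys.rgb_to_hsv(*rgb)[1]

# Hypothetical muted leaf color, channels in the range 0.0 to 1.0.
muted_green = (0.40, 0.55, 0.35)
vivid_green = boost_saturation(muted_green)
print(saturation(muted_green), saturation(vivid_green))
```

The hue stays the same and the brightest channel is unchanged, but the weaker channels are pushed down, so the leaf reads as a purer green than the one that was actually there.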

The Data Privacy Angle Nobody's Discussing

Here's where it gets genuinely uncomfortable. To train these computational photography systems, companies needed millions of images. Real human faces. Real bodies. Real homes and environments. Much of that training data came from the internet, but some of it came from users themselves.

When you enable cloud backups on your phone, you're not just storing photos. You're feeding a system that learns how to make photos of people like you look better. Or worse. Apple claims their processing happens on-device and they don't keep the training data. Google is less transparent. But all of these companies use your photos—sometimes explicitly with your permission, sometimes buried in terms of service—to improve their algorithms.

The problem deepens when you realize these systems often apply different enhancement standards to different people. A comprehensive analysis by researchers at MIT found that Google's computational photography applied different smoothing levels to faces of different ethnicities, consistently over-processing darker skin tones. Whether intentional or the result of training data bias, the effect was the same: some people's photos were being "corrected" more aggressively than others.

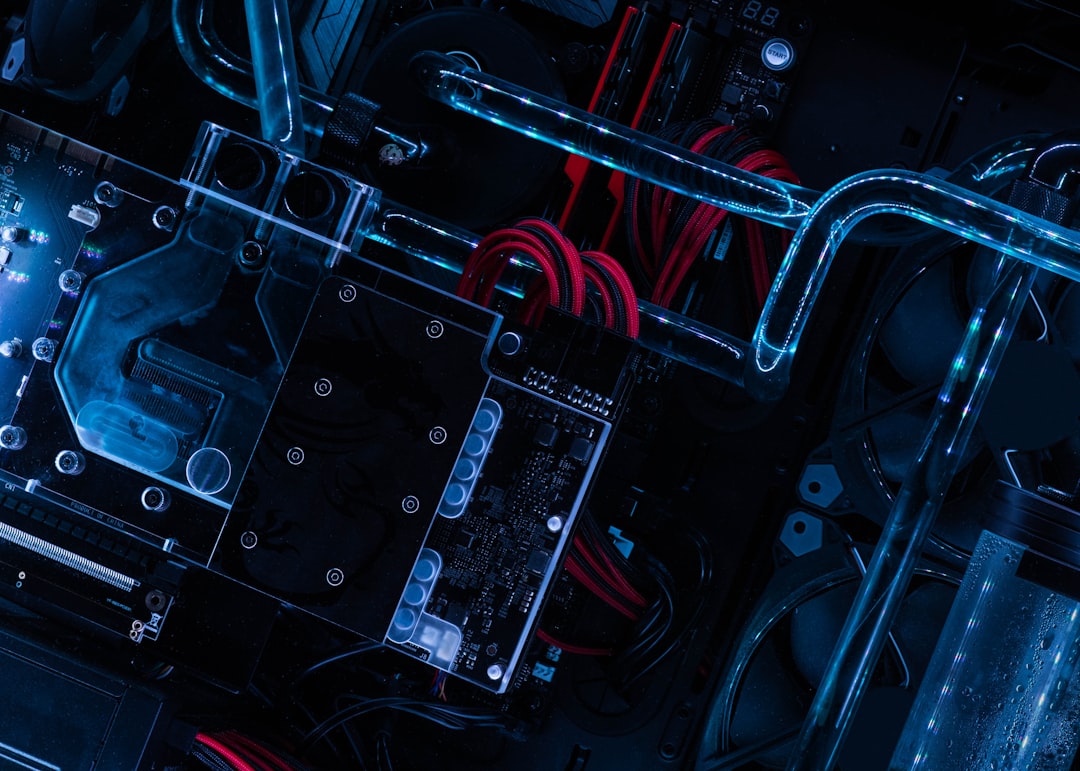

What's Happening Under the Hood

Let's talk about the specific technologies. Most modern phones use something called multi-frame fusion. Your phone takes 3 to 10 photos in rapid succession when you press the shutter, each with slightly different settings. These are then blended together using computational techniques that would have seemed impossible on a phone a little over a decade ago.
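The simplest reason to merge a burst is noise. Here is a hedged Python sketch: it simulates a burst of noisy frames of a flat gray scene and averages them. Real pipelines also align the frames and reject motion, which this skips, and the noise level, frame count, and function names are all illustrative assumptions.

```python
import random

def capture_burst(scene, n_frames, noise=0.05, seed=0):
    """Simulate a burst: the same scene with fresh sensor noise per frame."""
    rng = random.Random(seed)
    return [[p + rng.gauss(0, noise) for p in scene] for _ in range(n_frames)]

def merge_burst(frames):
    """Average the frames pixel by pixel (assumes they are already aligned)."""
    return [sum(pixels) / len(pixels) for pixels in zip(*frames)]

def rms_error(image, reference):
    """Root-mean-square difference between an image and the true scene."""
    squared = sum((a - b) ** 2 for a, b in zip(image, reference))
    return (squared / len(reference)) ** 0.5

scene = [0.5] * 1000  # flat mid-gray scene, 1000 pixels
burst = capture_burst(scene, n_frames=8)
single_error = rms_error(burst[0], scene)
merged_error = rms_error(merge_burst(burst), scene)
print(single_error, merged_error)
```

Averaging N frames with independent noise cuts the noise by roughly the square root of N, so eight frames should land near a third of the single-frame error. That is a real gain in fidelity. The interpretive decisions come in the steps layered on top of it.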

Night mode is perhaps the most impressive, and the most concerning. When you take a photo in near-darkness, your phone is essentially performing digital magic. It captures a series of exposures over a second or more, aligns and merges them, applies aggressive noise reduction (which removes detail), boosts colors that may not actually exist in the scene, and enhances edges to make everything look sharp. The result looks like a professional night photography setup, but in reality, your phone is reconstructing what it thinks the scene should look like, not what you actually see.
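Two of those steps, noise reduction and edge enhancement, can be sketched in a few lines of Python. This is a deliberately crude stand-in: a box blur plays the denoiser and a basic unsharp mask plays the sharpener, and the pixel rows are made up. Actual night modes use far more elaborate filters.

```python
def box_blur(row, radius=1):
    """Crude denoiser: average each pixel with its neighbors."""
    n = len(row)
    out = []
    for i in range(n):
        window = [row[min(max(j, 0), n - 1)]
                  for j in range(i - radius, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out

def unsharp_mask(row, amount=1.0):
    """Crude sharpener: push each pixel away from its blurred value."""
    blurred = box_blur(row)
    return [p + amount * (p - b) for p, b in zip(row, blurred)]

def contrast(row):
    """Difference between the brightest and darkest pixel."""
    return max(row) - min(row)

texture = [0.4, 0.6] * 4         # fine detail, such as fabric or foliage
denoised = box_blur(texture)     # the detail is mostly averaged away

edge = [0.2] * 3 + [0.8] * 3     # a clean boundary between dark and light
sharpened = unsharp_mask(edge)   # overshoots on both sides of the boundary
print(contrast(texture), contrast(denoised))
print(min(sharpened), max(sharpened))
```

The denoiser cannot tell fine texture from noise, so the texture loses most of its contrast. The sharpener then exaggerates the edge beyond the original range, producing values darker and brighter than anything in the scene. The output looks crisp, but part of that crispness is manufactured.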

There's also an entire layer of image processing dedicated to what the industry calls "semantic segmentation." The AI identifies different elements of your photo—sky, face, foliage, water—and applies customized adjustments to each. This is incredibly sophisticated technology. It's also a form of reality manipulation that most people don't even realize is happening.
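Once a segmentation model has labeled each pixel, the per-region step is conceptually simple. The Python sketch below assumes the labels already exist. The label names, gain values, and function name are hypothetical, and a single brightness gain stands in for the much richer adjustments a real pipeline would apply.

```python
# Hypothetical per-label brightness gains, invented for illustration.
ADJUSTMENTS = {
    "sky": 1.20,
    "foliage": 1.10,
    "face": 1.00,
}

def adjust_by_segment(pixels, labels, adjustments=ADJUSTMENTS):
    """Scale each pixel by the gain for its label, clamped to 1.0.

    Labels without an entry in the table are left unchanged.
    """
    return [min(p * adjustments.get(label, 1.0), 1.0)
            for p, label in zip(pixels, labels)]

pixels = [0.70, 0.90, 0.40, 0.55]
labels = ["sky", "sky", "foliage", "face"]
result = adjust_by_segment(pixels, labels)
print(result)
```

The same brightness value gets a different treatment depending on what the model decided it was looking at. A mislabeled pixel therefore receives the wrong adjustment, which is one way semantic editing produces odd halos around subjects.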

The Question We Need to Ask Ourselves

Is this a problem? Genuinely, I'm not sure. Photography has always been a form of interpretation rather than pure documentation. Even film cameras had limitations that created a particular aesthetic. Digital cameras introduced their own biases. But smartphones feel different because the processing is invisible and universal.

If you want more insight into how technology companies are quietly changing your experience without your knowledge, check out our article on how your router is secretly obsolete—the same logic applies across the tech ecosystem.

Maybe the real issue is that we've accepted enhancement as the default. When was the last time you saw a smartphone photo that looked "normal"? When enhancement is universal, we've collectively agreed that reality needs improvement.

The next time you take a photo with your smartphone, try this: look at the scene with your naked eye. Really observe it. Then look at the processed result on your screen. Notice the differences. Ask yourself which one is "real." Because increasingly, the answer is: both of them. And neither of them.
