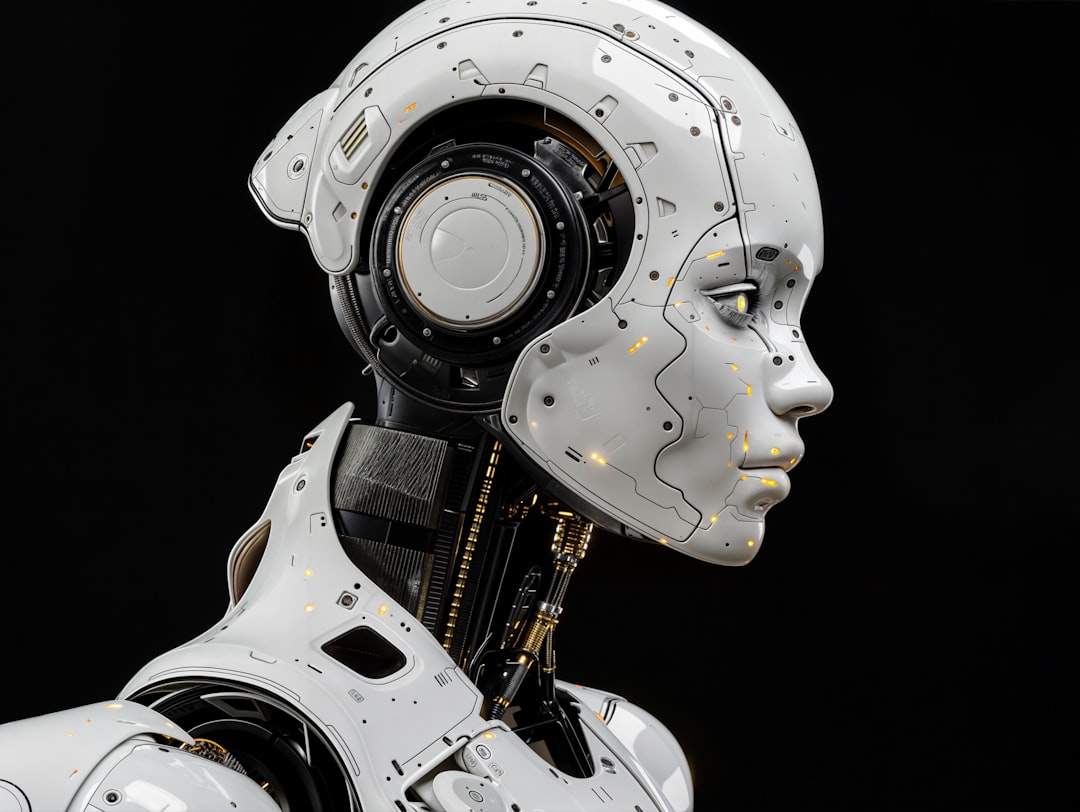

Photo by Gabriele Malaspina on Unsplash

Last Tuesday, my friend asked ChatGPT what the highest-grossing film of 1987 was. The AI responded instantly: Wall Street. It sounded authoritative. Specific. Certain. My friend accepted it and moved on.

Except Wall Street wasn't even close to being the highest-grossing film of 1987. At the U.S. box office, that title belongs to Three Men and a Baby. And here's the thing: ChatGPT didn't just get the fact wrong. It delivered the wrong answer in exactly the same tone, and with exactly the same confidence, that it would use for a correct one.

This problem sits at the heart of modern AI, and it's way more insidious than people realize. We've built systems that are phenomenal at sounding right, but terrible at knowing when they're wrong. They're the intellectual equivalent of that coworker who speaks with absolute certainty about things they barely understand.

Why Your Brain Trusts the Eloquent Liar

Humans have an evolutionary weakness: we conflate eloquence with truth. A confident voice presenting a well-formed argument triggers our trust mechanisms more than a hesitant person saying something accurate. This is actually a feature when you're navigating the real world—confidence signals expertise, and expertise signals survival value.

But AI has hacked this feature.

Large language models generate text by predicting the next word (strictly, the next token) in a sequence. They're not checking whether they're right. At each step they compute a probability for every possible next token and pick from the most likely candidates. Trained on billions of words from the internet, they've absorbed the rhetorical patterns of confident people. And when they're fine-tuned on human feedback, answers phrased as clean declarative sentences packed with specific details can be rated higher than hedged ones, so that style gets reinforced.

So they do exactly that, regardless of whether the information is real.
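Here's a toy sketch of that mechanism in Python. It's not how any production model is implemented: the candidate answers and their scores (logits) are invented for illustration. A softmax turns the scores into probabilities, and greedy decoding picks the top candidate. Notice that the sentence it produces reads the same whether the winning answer held 97% of the probability or barely more than a third.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    # Subtract the largest score first for numerical stability.
    top = max(logits.values())
    exps = {word: math.exp(score - top) for word, score in logits.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

def greedy_pick(logits):
    """Return the most probable candidate and its probability."""
    probs = softmax(logits)
    best = max(probs, key=probs.get)
    return best, probs[best]

def complete(prefix, logits):
    # Only the chosen candidate reaches the text; its probability is thrown away.
    best, _ = greedy_pick(logits)
    return f"{prefix} {best}."

PREFIX = "The highest-grossing film of 1987 was"

# Hypothetical scores: a clear favorite vs. a near three-way tie.
clear = {"Three Men and a Baby": 5.0, "Wall Street": 1.0, "Fatal Attraction": 0.5}
tied = {"Wall Street": 1.1, "Three Men and a Baby": 1.0, "Fatal Attraction": 0.9}

for scores in (clear, tied):
    _, p = greedy_pick(scores)
    print(complete(PREFIX, scores), f"(hidden probability: {p:.2f})")
```

The probability exists inside the computation, but nothing in the generated sentence carries it through to the reader.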

A 2023 study from UC Berkeley found that when GPT-3.5 was uncertain about answers, it actually became *more* confident in its false responses. Let me repeat that: the model's uncertainty led to *more* confident bullshitting. When probabilities were genuinely ambiguous, the system defaulted to authoritativeness as a way to resolve the ambiguity.

That's not a bug. That's an emergent property of how these systems learn.

The Hallucination Problem Is Actually a Feature

Here's what gets to me: AI companies often call these false answers "hallucinations," as if the system is having an involuntary moment of confusion. The terminology lets them off the hook. These aren't hallucinations—they're predictions. The system is doing exactly what it was designed to do (predict the next word) in an environment where it has no actual mechanism to verify truth.

When you ask an AI system a factual question, you're not consulting an oracle. You're sampling from a probability distribution over plausible continuations of your question. Sometimes that distribution produces something true. Sometimes it doesn't. Nothing inside the model marks the difference between the two.
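A minimal sketch of that sampling step, assuming a hypothetical distribution over three answers (the probabilities are made up, not measured from any real model). Asked the same question a thousand times, this toy "model" names the right film only some of the time, and every response uses the same confident template.

```python
import random

# Hypothetical answer probabilities; the correct answer is merely the most likely.
ANSWERS = {
    "Three Men and a Baby": 0.45,
    "Wall Street": 0.35,
    "Fatal Attraction": 0.20,
}
CORRECT = "Three Men and a Baby"  # top film at the 1987 U.S. box office

def sample_answer(probs, rng, temperature=1.0):
    """Draw one answer; temperature < 1 sharpens the distribution, > 1 flattens it."""
    # Raising p to 1/T is equivalent to dividing log-probabilities by T.
    weights = [p ** (1.0 / temperature) for p in probs.values()]
    return rng.choices(list(probs), weights=weights, k=1)[0]

def respond(rng):
    # Same declarative template whether the sampled answer is right or wrong.
    return f"The highest-grossing film of 1987 was {sample_answer(ANSWERS, rng)}."

rng = random.Random(42)  # seeded so the run is reproducible
responses = [respond(rng) for _ in range(1000)]
n_correct = sum(CORRECT in r for r in responses)
print(f"{n_correct} of {len(responses)} responses were correct")
print(f"{len(set(responses))} distinct answers, all phrased identically")
```

Lowering the temperature makes the top answer come out nearly every time. That looks more consistent, but it doesn't make the answer any more likely to be true.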

Consider this practical example: I tested Claude 3 (a newer, supposedly more accurate model) with the question, "How many Academy Awards did the film Oppenheimer win?" Before the Oscars aired, it gave a hedged response. But if you pushed it with follow-up questions, it would eventually commit to a number with moderate confidence, even though the ceremony hadn't happened yet and it literally had no way of knowing.

The system's architecture doesn't include a "truth verification" module. It's built from stacked attention and feed-forward layers that predict text, with nothing that checks claims against reality. It's like asking someone who's never left their hometown to describe Paris: they answer with complete confidence, and that confidence isn't a bug, it's a feature of not knowing their own limitations.

Why This Matters More Than You Think

The dangerous part isn't that AI gets things wrong. It's that wrong answers and right answers feel identical to the user.

A doctor friend told me about a scenario where a young resident asked an AI system about a rare drug interaction. The system confidently described an interaction that was completely fabricated. Not a nuanced error. Not a hedged "might cause" statement. A direct, false claim presented as fact. The resident almost wrote a prescription based on it.

That's not a theoretical problem anymore. As these systems integrate deeper into workflows such as medical decision-making, legal research, and financial analysis, the cost of undetectable false confidence rises sharply.

What makes this especially tricky is that AI systems are often *right* enough that users develop trust. You ask ten questions, get nine correct and one completely wrong, and you start assuming the system knows what it's talking about. Your trust calibrates to the successful predictions, even though each answer is all-or-nothing: either it helps, or it leads you off a cliff.
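The arithmetic behind that trap is worth a quick look. Assume, purely for illustration, an assistant that's right 90% of the time and whose mistakes are independent from question to question (real errors are often correlated, so treat this as a rough model):

```python
def chance_of_at_least_one_error(accuracy, n_questions):
    """Probability that at least one of n independent answers is wrong."""
    return 1.0 - accuracy ** n_questions

for n in (1, 10, 50):
    risk = chance_of_at_least_one_error(0.9, n)
    print(f"{n:>2} questions: {risk:.1%} chance of at least one wrong answer")
```

Under those assumptions, ten questions already carry about a 65% chance of at least one wrong answer, and by fifty it's above 99%. The nine good answers don't protect you from the tenth; they just make it harder to spot.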

What Actually Needs to Happen

Some researchers are working on better uncertainty quantification. The idea is to make AI systems actually report their confidence levels rather than just presenting answers. If a system says "I'm 78% confident" versus "I don't have reliable information on this," that changes the equation.

But here's the catch: many of these approaches require retraining these massive models, adding extra layers, or running the model several times per question and comparing the answers. All of that is expensive. It makes the AI slower and less impressive to users who'd rather have instant, confident-sounding responses.
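One approach that's easy to explain, often called self-consistency, asks the model the same question several times and treats the agreement rate among its answers as a rough confidence score. Here's a sketch that assumes the sampled answers have already been collected; the lists below are hypothetical stand-ins for real model calls, and the 0.7 threshold for declining to answer is an arbitrary choice.

```python
from collections import Counter

def agreement_confidence(samples):
    """Return the most common answer and the fraction of samples that agree with it."""
    if not samples:
        raise ValueError("need at least one sampled answer")
    answer, count = Counter(samples).most_common(1)[0]
    return answer, count / len(samples)

def answer_or_abstain(samples, threshold=0.7):
    """Report the answer with its agreement rate, or decline when agreement is low."""
    answer, confidence = agreement_confidence(samples)
    if confidence < threshold:
        return "I don't have reliable information on this."
    return f"{answer} (about {confidence:.0%} of samples agree)"

# Hypothetical samples standing in for repeated calls to a model.
consistent = ["Three Men and a Baby"] * 9 + ["Wall Street"]
scattered = ["Wall Street", "Three Men and a Baby", "Fatal Attraction",
             "Wall Street", "Three Men and a Baby"]

print(answer_or_abstain(consistent))
print(answer_or_abstain(scattered))
```

Two caveats. Agreement is not truth: a model can repeat the same wrong answer ten times out of ten. And ten samples cost roughly ten times the compute of one, which is exactly the kind of trade-off at stake here.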

There's also the practical fix that doesn't require changing the AI at all: users need to develop skepticism. You wouldn't trust a random person on the internet just because they sounded certain. But we do trust AI because of the polished presentation and our assumption that systems of sufficient complexity must have some ground truth beneath them.

We need to stop assuming that sophistication equals reliability. The brittleness crisis in AI isn't just a technical problem—it's a trust problem. And right now, our trust is completely miscalibrated.
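Calibration has a precise meaning worth borrowing here: among all the answers given with about 78% confidence, roughly 78% should turn out to be right. A standard way to measure the gap is expected calibration error (ECE): group answers by stated confidence, compare each group's average confidence with its actual accuracy, and take a weighted average of the differences. The sketch below uses invented (confidence, was_correct) pairs and five equal-width bins, both illustrative assumptions.

```python
def expected_calibration_error(predictions, n_bins=5):
    """Weighted average gap between stated confidence and observed accuracy.

    predictions: list of (confidence in [0, 1], was_correct: bool) pairs.
    """
    bins = [[] for _ in range(n_bins)]
    for confidence, correct in predictions:
        # Put confidence 1.0 in the top bin rather than out of range.
        index = min(int(confidence * n_bins), n_bins - 1)
        bins[index].append((confidence, correct))

    total = len(predictions)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece

# Hypothetical overconfident system: always 95% sure, right only 6 times in 10.
overconfident = [(0.95, i < 6) for i in range(10)]
# Hypothetical well-calibrated system: 60% sure and right 6 times in 10.
calibrated = [(0.6, i < 6) for i in range(10)]

print(round(expected_calibration_error(overconfident), 2))
print(round(expected_calibration_error(calibrated), 2))
```

A score near zero means stated confidence can be taken at face value. The overconfident example lands at 0.35: its certainty overstates its accuracy by 35 percentage points, which is what misplaced trust looks like in numbers.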

The Uncomfortable Truth

We've built systems that are exceptionally good at one thing: saying things that sound right. As they become more useful, more embedded, more essential to our workflows, that capability becomes more dangerous. Not because the systems are getting smarter, but because we're getting more confident in them.

The weirdest part? The AI doesn't care. It's not trying to deceive you. It's not even capable of deception in the way we understand it. It's just doing the math, finding the pattern that fits, and expressing it in the most eloquent way possible.

And that's somehow more unsettling than if it were deliberately lying to us.
